Lesson Overview

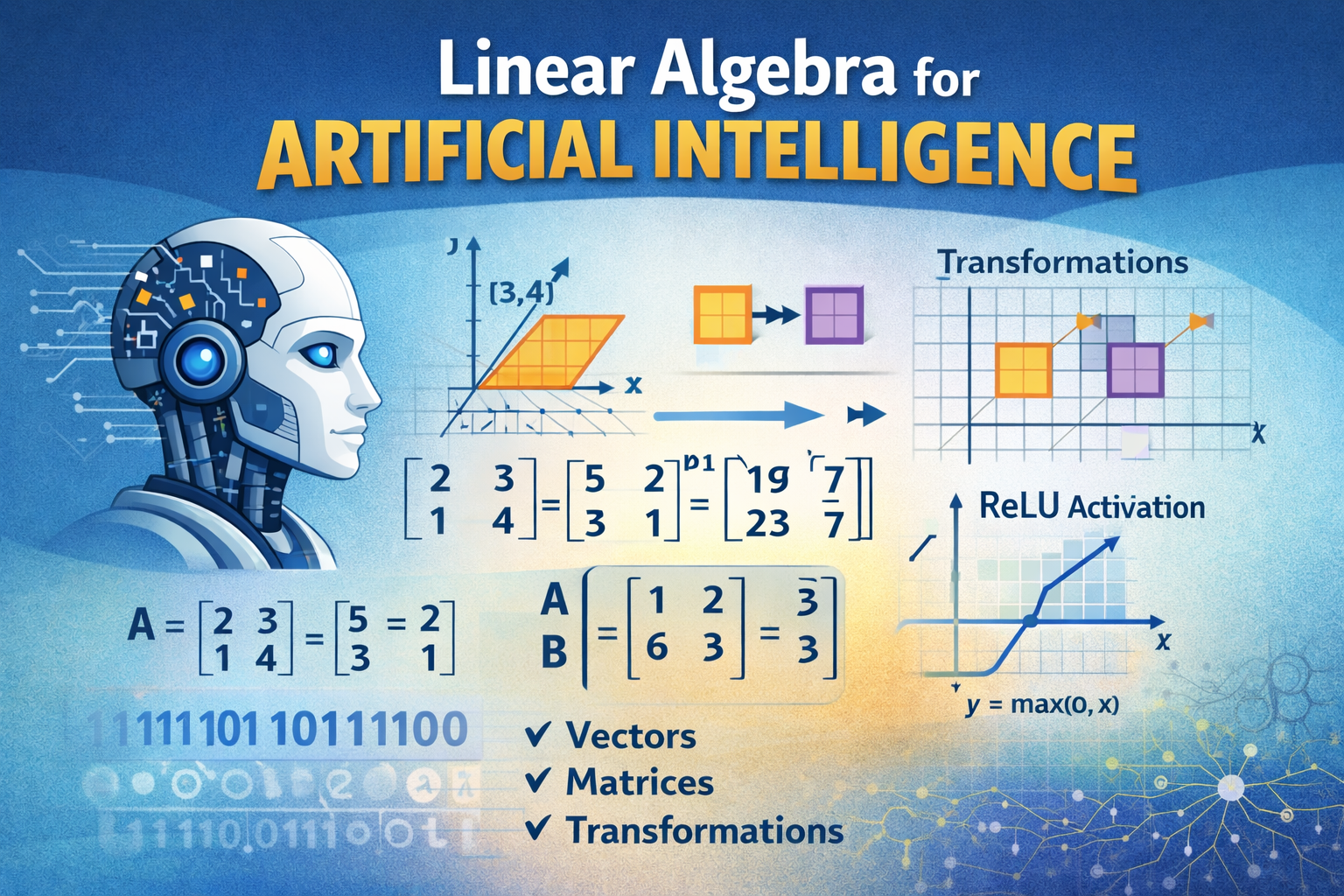

Linear algebra is one of the most important mathematical foundations used in Artificial Intelligence, Machine Learning, and Deep Learning. Many AI systems rely on mathematical structures such as vectors, matrices, and linear transformations to represent data and perform complex computations. These concepts allow machines to process large amounts of information efficiently and identify patterns within datasets.

In AI applications, linear algebra helps computers perform operations such as image recognition, speech processing, recommendation systems, and predictive modelling. Neural networks, which are the backbone of modern AI systems, rely heavily on matrix multiplication and vector calculations to process data and adjust model parameters during training.

1. What is Linear Algebra?

Linear algebra is a branch of mathematics that focuses on vectors, matrices, and linear transformations. These mathematical structures help represent and manipulate data in multi-dimensional spaces.

In artificial intelligence, linear algebra is used to:

- Represent datasets as numerical structures

- Transform data for machine learning models

- Perform calculations in neural networks

- Optimize algorithms used in AI systems

Because AI systems process large datasets, linear algebra provides the mathematical tools needed to perform efficient calculations.

2. Vectors

A vector is a mathematical object that has both magnitude (size) and direction. Geometrically, vectors can be represented as arrows pointing from one point to another.

For example:

v = (3, 4)

This vector represents movement of 3 units along the x-axis and 4 units along the y-axis.
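As a small illustration, the magnitude of this vector can be computed from its two components. The sketch below uses only Python's standard `math` module; the variable names are chosen for illustration.

```python
import math

# The example vector v = (3, 4) stored as a plain tuple.
v = (3, 4)

# Magnitude (length) of v: sqrt(3^2 + 4^2).
magnitude = math.hypot(v[0], v[1])

print(magnitude)  # 5.0
```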

Vectors are widely used in AI to represent:

- Data points in machine learning

- Word representations in natural language processing

- Pixel values in images

- Feature sets in datasets

Each data point in a machine learning model can be represented as a vector containing several values.

3. Matrices

A matrix is a rectangular arrangement of numbers organized into rows and columns.

Example matrix:

$$\begin{bmatrix} 2 & 3 \\ 1 & 4 \end{bmatrix}$$

Matrices are extremely important in AI because they allow computers to process large amounts of data simultaneously. Many operations in machine learning involve multiplying matrices together to transform data.

Matrices are used in AI for:

- Storing datasets

- Performing transformations

- Calculating neural network outputs

- Image processing operations

There are several types of matrices including:

- Row matrix

- Column matrix

- Square matrix

- Diagonal matrix

- Symmetric matrix

These different matrix types are used depending on the mathematical problem being solved.

4. Matrix Operations

Matrix operations allow mathematical manipulation of matrices. Some of the most common matrix operations include:

Matrix Addition

Two matrices can be added if they have the same dimensions.

Example:

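$$A = \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix}$$

$$B = \begin{bmatrix} 5 & 6 \\ 7 & 8 \end{bmatrix}$$

$$A + B = \begin{bmatrix} 6 & 8 \\ 10 & 12 \end{bmatrix}$$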
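The same addition can be sketched in plain Python, with each matrix stored as a list of rows. The helper name `add_matrices` is an illustrative choice, not part of any library.

```python
# Matrices from the example, stored as lists of rows.
A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]

def add_matrices(a, b):
    """Add two matrices of identical dimensions element by element."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("matrices must have the same dimensions")
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

print(add_matrices(A, B))  # [[6, 8], [10, 12]]
```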

Matrix Multiplication

Matrix multiplication is one of the most important operations in AI systems.

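If matrix A is multiplied by matrix B, each entry of the result is obtained by multiplying a row of A by a column of B element by element and summing the products. This requires the number of columns in A to equal the number of rows in B.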

Matrix multiplication is used extensively in neural networks, where inputs are multiplied by weight matrices to generate predictions.
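A minimal sketch of the rows-by-columns rule in plain Python is shown below. The function name `matmul` and the two sample matrices are assumptions made for illustration; real AI systems typically use an optimized numerical library instead.

```python
def matmul(a, b):
    """Multiply matrix a (m x n) by matrix b (n x p), both lists of rows."""
    if any(len(row) != len(b) for row in a):
        raise ValueError("columns of a must equal rows of b")
    cols_b = list(zip(*b))  # the columns of b
    # Each output entry is the sum of products of one row of a and one column of b.
    return [[sum(x * y for x, y in zip(row, col)) for col in cols_b] for row in a]

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul(A, B))  # [[19, 22], [43, 50]]
```

For instance, the top-left entry is 1×5 + 2×7 = 19.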

5. Linear Transformations

A linear transformation is a mathematical operation that maps vectors to vectors while preserving vector addition and scalar multiplication. As a result, straight lines stay straight and the origin stays fixed.

A transformation can change:

- Scale

- Rotation

- Direction

- Position (points can move, but the origin always stays fixed)

Linear transformations are important in AI because they allow machine learning models to convert raw data into useful representations for learning patterns.

For example:

A transformation function may convert image pixel values into features that help an AI system recognize objects.

6. Activation Functions and ReLU

In deep learning, linear algebra operations are often combined with activation functions.

One common activation function is ReLU (Rectified Linear Unit).

ReLU works as follows:

- If the input value is positive → return the value

- If the input value is zero or negative → return 0

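Because ReLU is non-linear, it lets neural networks learn complex patterns that stacked linear operations alone cannot capture, and because it is cheap to compute, it helps keep training efficient.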
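The rule can be written as ReLU(x) = max(0, x). The following is a minimal Python sketch of that rule applied to a few made-up input values.

```python
def relu(x):
    """Return x if it is positive, otherwise 0."""
    return max(0.0, x)

# Hypothetical inputs covering negative, zero, and positive values.
print([relu(x) for x in [-2.0, -0.5, 0.0, 1.5, 3.0]])
# [0.0, 0.0, 0.0, 1.5, 3.0]
```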

7. Importance of Linear Algebra in AI

Linear algebra is essential in AI because it allows systems to process high-dimensional data efficiently. Some key areas where linear algebra is used include:

- Neural networks

- Machine learning algorithms

- Computer vision

- Natural language processing

- Data analytics

Without linear algebra, it would be extremely difficult for computers to process the large datasets required for modern AI systems.

1. Extended Examples: Basic Mathematics in AI

Example 1: Operator Precedence in Programming

Expression:

$$8 + 4 \times 3^2$$

Using PEMDAS/BODMAS:

- Exponent → $3^2 = 9$

- Multiplication → $4 \times 9 = 36$

- Addition → $8 + 36 = 44$

Final Answer:

44
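Python follows the same order of operations, which can be checked directly. In the sketch below, `**` is Python's exponent operator and `*` is multiplication.

```python
# Python applies exponentiation (**) before multiplication (*),
# and multiplication before addition (+).
result = 8 + 4 * 3 ** 2
print(result)  # 44

# Parentheses override the default order.
grouped = (8 + 4) * 3 ** 2
print(grouped)  # 108
```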

Example 2: Integer Division in Programming

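In Python (with the floor-division operator `//`) or in C-style languages (with `/` applied to two integers), dividing 15 by 4 gives a whole number.

Result:

$$15 \div 4 = 3$$

The decimal part is discarded.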

Real AI example:

When dividing a dataset into batches of training data, integer division determines how many full batches exist.
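A short Python sketch of both points follows. The dataset size and batch size are hypothetical numbers chosen only to illustrate the calculation.

```python
# Floor division in Python: the fractional part is discarded.
print(15 // 4)  # 3
# True division, for comparison, keeps the fractional part.
print(15 / 4)   # 3.75

# Hypothetical numbers: 1050 training samples, 32 samples per batch.
num_samples = 1050
batch_size = 32
full_batches = num_samples // batch_size   # number of complete batches
leftover = num_samples % batch_size        # samples that do not fill a batch
print(full_batches, leftover)  # 32 26
```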

Example 3: Modulus in Real Applications

Example:

$$17 \bmod 5$$

Step:

17 ÷ 5 = 3 remainder 2

Result:

$$17 \bmod 5 = 2$$

AI Application:

The modulus operator is used for:

- cyclic operations

- hashing functions

- alternating training samples

Example:

The test `i % 2 == 0` is true for even values of `i`, so it can be used to process only the even-indexed data samples.
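As a sketch, Python's `%` operator computes the modulus, and it can pick out even-indexed items from a list. The `samples` list is a made-up stand-in for real training data.

```python
print(17 % 5)  # 2

# Hypothetical data samples; keep those at even indices (0, 2, 4, ...).
samples = ["a", "b", "c", "d", "e"]
even_indexed = [s for i, s in enumerate(samples) if i % 2 == 0]
print(even_indexed)  # ['a', 'c', 'e']
```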

2. Extended Examples: Linear Algebra in AI

Linear algebra is the foundation of machine learning models.

Example 1: Vector Representation in Machine Learning

A dataset representing a house:

| Feature | Value |

|---|---|

| Bedrooms | 3 |

| Bathrooms | 2 |

| Size (m²) | 150 |

Vector representation:

$$v = [3, 2, 150]$$

AI uses vectors like this to train models.

Example 2: Matrix Representation

Suppose 3 houses:

$$X = \begin{bmatrix} 3 & 2 & 150 \\ 4 & 3 & 200 \\ 2 & 1 & 90 \end{bmatrix}$$

Each row = observation

Each column = feature

Machine learning algorithms operate on matrices like this.
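One simple way to hold such a matrix in Python is a list of rows, as sketched below. The column order (bedrooms, bathrooms, size) follows the house example; the variable names are illustrative.

```python
# Feature matrix: one row per house,
# columns are bedrooms, bathrooms, size in m².
X = [
    [3, 2, 150],
    [4, 3, 200],
    [2, 1, 90],
]

num_observations = len(X)     # number of rows
num_features = len(X[0])      # number of columns
print(num_observations, num_features)  # 3 3

# Selecting one feature (column index 2, the size) across all houses.
sizes = [row[2] for row in X]
print(sizes)  # [150, 200, 90]
```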

Example 3: Linear Transformation

Matrix transformation:

$$T(x, y) = \begin{bmatrix} 2 & 0 \\ 0 & 2 \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix}$$

Applied to vector:

$$(3, 4)$$

Result:

$$T(3, 4) = (6, 8)$$

This transformation scales the vector by 2.

Used in:

- neural networks

- graphics

- dimensionality reduction
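The scaling example can be reproduced with a small matrix-times-vector helper. This is a sketch in plain Python; the name `transform` and the second vector `w` are assumptions added for illustration.

```python
def transform(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

T = [[2, 0], [0, 2]]          # scaling matrix from the example
print(transform(T, [3, 4]))   # [6, 8]

# Linearity check: transforming a sum equals the sum of the transforms.
u, w = [3, 4], [1, -1]
lhs = transform(T, [a + b for a, b in zip(u, w)])
rhs = [a + b for a, b in zip(transform(T, u), transform(T, w))]
print(lhs == rhs)  # True
```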

3. Extended Examples: Binary Systems

At the hardware level, computers store and process all data as binary numbers (0 and 1).

Example 1: Binary to Decimal Conversion

Binary:

10101

Calculation:

$$(1 \times 2^4) + (0 \times 2^3) + (1 \times 2^2) + (0 \times 2^1) + (1 \times 2^0)$$

$$16 + 0 + 4 + 0 + 1$$

Result:

21
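The same conversion can be sketched in Python, first by summing powers of two and then with the built-in `int` function, which accepts a base as its second argument.

```python
bits = "10101"

# Sum each digit times its power of two, starting from the rightmost digit.
value = sum(int(bit) * 2 ** power for power, bit in enumerate(reversed(bits)))
print(value)  # 21

# Python's built-in int() performs the same conversion.
print(int(bits, 2))  # 21
```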

Example 2: Binary Addition

Add:

+0101

Steps:

1+0 = 1

0+1 = 1

1+0 = 1

Result:

4. Extended Examples: Scientific Notation

Used in AI datasets and computing, where numbers can be very large or very small.

Example 1: Large Number

3,400,000,000

Scientific notation:

$$3.4 \times 10^9$$

Example 2: Small Number

0.00000045

Scientific notation:

$$4.5 \times 10^{-7}$$
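Python can display both numbers in scientific notation with the `e` format code, where `e+09` stands for "times 10 to the power 9". A brief sketch:

```python
large = 3_400_000_000
small = 0.00000045

# The "e" format shows a number as a mantissa and a power of ten.
large_text = f"{large:.1e}"
small_text = f"{small:.1e}"
print(large_text)  # 3.4e+09
print(small_text)  # 4.5e-07

# The same values can be written directly as scientific-notation literals.
print(3.4e9 == large)  # True
```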

5. Extended Examples: Cartesian Coordinates

Used in:

- graphics

- robotics

- AI navigation systems

Example 1: Plotting a Point

Point:

$$(3, -2)$$

Interpretation:

- move 3 units right

- move 2 units down

Location: Quadrant IV

Example 2: Distance Between Two Points

Points:

A(2,3)

B(6,7)

Distance formula:

$$d = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$$

$$d = \sqrt{(6 - 2)^2 + (7 - 3)^2}$$

$$d = \sqrt{16 + 16}$$

$$d = \sqrt{32}$$

$$d \approx 5.66$$

Used in:

- clustering algorithms

- recommendation systems
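The distance between A and B can be checked with a few lines of Python. The sketch writes the formula out by hand and then compares it with `math.dist`, which is available in Python 3.8 and later.

```python
import math

a = (2, 3)
b = (6, 7)

# Euclidean distance written out with the distance formula.
d = math.sqrt((b[0] - a[0]) ** 2 + (b[1] - a[1]) ** 2)
print(round(d, 2))  # 5.66

# math.dist computes the same value.
print(math.isclose(d, math.dist(a, b)))  # True
```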

6. Extended Examples: Pythagorean Theorem

Formula:

$$a^2 + b^2 = c^2$$

Example 1

Triangle sides:

a = 5

b = 12

$$c^2 = 5^2 + 12^2$$

$$c^2 = 25 + 144$$

$$c^2 = 169$$

$$c = 13$$

This is a Pythagorean triple.

Example 2: AI Robot Navigation

Robot moves:

- 6 meters east

- 8 meters north

Straight-line distance from the starting point:

$$\sqrt{6^2 + 8^2} = \sqrt{36 + 64} = \sqrt{100} = 10$$

The robot ends up 10 meters from its starting point, measured diagonally, even though it traveled 14 meters along its path.
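Both examples can be reproduced with `math.hypot`, which returns the length of the hypotenuse for two given legs. The variable names in this sketch are illustrative.

```python
import math

# Example 1: legs 5 and 12.
print(math.hypot(5, 12))  # 13.0

# Example 2: 6 m east, then 8 m north.
east, north = 6, 8
straight_line = math.hypot(east, north)   # diagonal distance from the start
path_length = east + north                # distance actually driven
print(straight_line, path_length)  # 10.0 14
```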

7. Extended Examples: Increments in Programming

Increment:

Example:

x++

New value:

Loop example:

Output:

1

2

3

4
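Python has no `++` operator, so the usual increment is written `x += 1`. The sketch below assumes a starting value of 5 for `x`, which is not given in the text, and shows one possible loop that prints the numbers 1 through 4.

```python
# Python has no ++ operator; += 1 is the usual increment.
x = 5          # hypothetical starting value
x += 1
print(x)       # 6

# A counting loop that increments i after each print.
i = 1
while i <= 4:
    print(i)   # prints 1, 2, 3, 4 on separate lines
    i += 1
```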

8. Extended Examples: Probability in AI

Probability formula:

$$P(A) = \frac{\text{Number of favorable outcomes}}{\text{Total outcomes}}$$

Example 1: Simple Probability

Probability of rolling a 4 on a fair six-sided die:

$$P(4) = \frac{1}{6}$$

Example 2: Spam Detection

If:

- 30 emails are spam

- 70 emails are normal

Total emails = 100

Probability email is spam:

$$P(\text{spam}) = \frac{30}{100} = 0.3$$
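Both probabilities follow from the same ratio of favorable to total outcomes. A minimal Python sketch using the standard `fractions` module is given below; the helper name `probability` is an illustrative choice.

```python
from fractions import Fraction

def probability(favorable, total):
    """Favorable outcomes divided by total outcomes, as an exact fraction."""
    return Fraction(favorable, total)

# Rolling a 4 on a fair six-sided die.
print(probability(1, 6))  # 1/6

# 30 spam emails out of 30 + 70 = 100 in total.
p_spam = probability(30, 30 + 70)
print(p_spam, float(p_spam))  # 3/10 0.3
```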

9. Extended Examples: Statistics in Machine Learning

Statistics helps AI:

- understand data patterns

- evaluate predictions

Example: Mean (Average)

Dataset:

4, 7, 9, 10

Mean:

$$\frac{4 + 7 + 9 + 10}{4} = \frac{30}{4} = 7.5$$
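The mean of the four values in the calculation (4, 7, 9, 10) can be checked in Python, either by hand or with the standard `statistics` module.

```python
import statistics

data = [4, 7, 9, 10]

# Sum of the values divided by how many there are.
manual_mean = sum(data) / len(data)
print(manual_mean)              # 7.5

# The standard library gives the same result.
print(statistics.mean(data))    # 7.5
```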

Example: Standard Deviation

Shows how spread out data is.

Small deviation → data close together

Large deviation → data widely spread

Important for:

- anomaly detection

- predictive models
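To make the idea of spread concrete, the sketch below compares two made-up datasets that share the same mean. It uses `statistics.pstdev`, the population standard deviation from Python's standard library; both lists are hypothetical.

```python
import statistics

close_together = [7, 7, 8, 8]     # hypothetical tightly clustered values
widely_spread = [1, 5, 10, 14]    # hypothetical widely spread values

# Both lists have the same mean but very different spreads.
print(statistics.mean(close_together), statistics.mean(widely_spread))  # 7.5 7.5

small_dev = statistics.pstdev(close_together)
large_dev = statistics.pstdev(widely_spread)
print(small_dev)             # 0.5
print(round(large_dev, 2))   # 4.92
```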

10. Advanced AI Example Combining Concepts

Imagine an AI self-driving car system.

Mathematics used:

| Concept | AI Use |

|---|---|

| Vectors | Represent object positions |

| Matrices | Transform images |

| Binary | Computer processing |

| Probability | Predict pedestrian movement |

| Statistics | Analyze driving data |

Lesson Summary

In this lesson, we explored the importance of linear algebra in artificial intelligence. We discussed vectors, matrices, matrix operations, linear transformations, and activation functions such as ReLU. These mathematical tools form the foundation of machine learning algorithms and neural network models. Understanding linear algebra helps learners grasp how AI systems represent data, perform calculations, and learn from large datasets.